In this series of articles, I’ll explore computer vision, starting with classical image processing techniques and progressively introducing deep learning techniques and the improvements they bring. These articles are inspired by the Computer Vision course at TTI Chicago.

What is computer vision?

Computer vision is a field of computer science that analyzes pictures (and videos) to build some understanding of their content, for example finding edges, detecting objects, or tracking a person.

Classical models used to extract that information include:

- physical models (geometry, light…)

- probabilistic models

More recent approaches rely on deep learning and offer outstanding results.
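To make the “finding edges” example more concrete, here is a minimal sketch of one classical, hand-designed approach: sliding a pair of Sobel kernels over a grayscale image and taking the gradient magnitude. The Sobel operator is my choice for illustration, not something prescribed by the course, and the function name `sobel_magnitude` and the toy image are hypothetical. It uses only the Python standard library so it can be run as-is.

```python
# Sketch only: Sobel gradient magnitude on a tiny grayscale image (pure Python).
# The kernel choice, function name, and toy image are illustrative assumptions.
import math

SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]


def sobel_magnitude(image):
    """Return the gradient magnitude at each interior pixel; border pixels stay 0."""
    height, width = len(image), len(image[0])
    out = [[0.0] * width for _ in range(height)]
    for r in range(1, height - 1):
        for c in range(1, width - 1):
            gx = gy = 0.0
            # Weighted sum of the 3x3 neighbourhood with each kernel.
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    pixel = image[r + dr][c + dc]
                    gx += SOBEL_X[dr + 1][dc + 1] * pixel
                    gy += SOBEL_Y[dr + 1][dc + 1] * pixel
            out[r][c] = math.hypot(gx, gy)
    return out


# Toy image: dark left half (0), bright right half (1), so one vertical edge.
toy_image = [[0, 0, 0, 1, 1, 1] for _ in range(5)]
edges = sobel_magnitude(toy_image)
for row in edges:
    print([round(v, 1) for v in row])
```

On this toy image the response is nonzero only in the two columns that straddle the dark/bright boundary, which is the sense in which the filter “finds” the edge. Real pipelines typically add smoothing and thresholding on top of this step.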

Computer vision can be seen as an inverse problem: we observe the world through an image and try to reconstruct the properties of that world (shape, illumination, color distribution…).

Computer vision was initiated around 1966 at MIT and was first applied to geometry. Early work, however, suffered from a lack of data and computing power.

What can computer vision be used for?

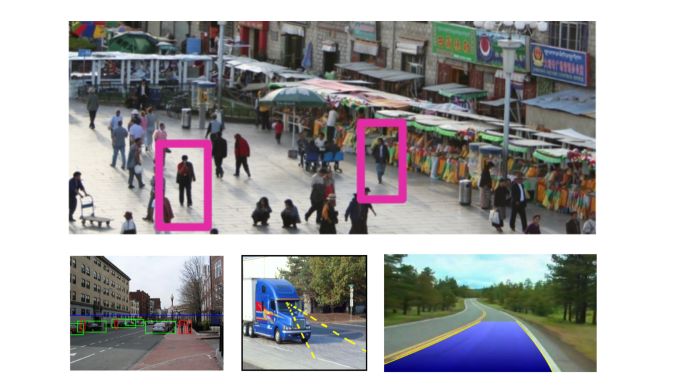

The main fields in which computer vision is applied are:

- Object verification: “Is that a lamp?”

- Detection: “Where are the people?”

- Activity recognition: “What are they doing?”

- Pose detection: “What pose are they in?”

- Description of attributes and relations: “Crowded square in China”

- Image enhancement / super-resolution / denoising

- Image uncropping / increasing field of view

- Image completion

- Fingerprint recognition

- Face detection / recognition

- Facial emotion recognition

- 3D reconstruction

- Medical imaging

- Assisted driving and smart cars

- …

**Conclusion**: I hope this quick introduction to computer vision was clear. Don’t hesitate to drop a comment if you have any questions.